February 11, 2021

Speech Analytics – Results From Our Demo

For more than three years, we at Call Criteria have been running our own speech analytics software. One of the most common questions we receive is how much the software can increase QA accuracy. In this article, we run through the results of our demo and show how much difference techniques such as syntax tuning can make for your call center.

The first thing that you should understand is how NLP (Natural Language Processing) works. We already have an article about that, and if you would like to learn more, click here. The link will open in a new tab so that you can come back to this article afterwards.

Managed Speech Analytics

One of the primary factors that sets Call Criteria apart from other QA companies is that we include managed speech analytics. Most QA companies will only complete the Quality Assurance that you ask them to, leaving you in need of a QA team to work out your own keywords, phrases, and requirements. Call Criteria will complete managed speech analytics for you, too.

What that means is that we have our own speech analysts, who work hard to gain a complete understanding of your products. There is therefore no requirement to have any analysts in your business unless you specifically want to. The only thing you will have to train people in, unless you already have the skill set in your company, is building syntax lists that are specific to your business.

However, this is something that we may be bringing into our services in the future, so contact us if this is something that would be a deciding factor on which company you pick for your QA.

How Does Call Criteria’s Speech Analytics Help You?

When you have an automated speech analytics system that detects keywords for you, it will also automatically mark a question correct or incorrect based on whether those words were heard. While that is not a new thing, inputting the keywords is often a difficult and challenging task. Call Criteria have simplified the process of keyword building by adding a human review aspect to assist you.

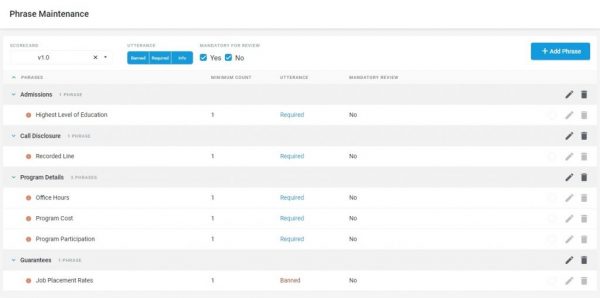

Before we get into the specifics of how human reviews help you more than data input alone, we will look at how you can build your own keyword lists. Within the dashboard, you have the option to use a toolbox. The toolbox page has many different options, but the one that we are looking at today is keyword maintenance.

Not all businesses and call centers will want the same keywords, but the principle is the same no matter what your requirements are. This article is therefore based on the demo that we completed to show you what can be accomplished.

In the image above, you can see that different required phrases within different sections appear on the scorecard that we have selected.

Selecting Initial Syntax

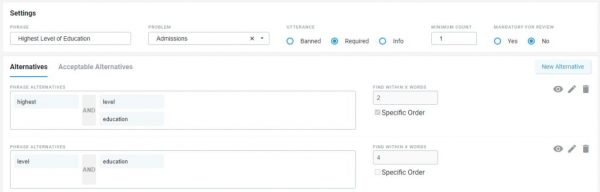

The first thing that you need to do when you are building out your keywords is to set your initial syntax. That is the most basic phrase that you think you require. As an example, we will use the Highest Level of Education point. We chose it because agents can use a wide range of words to obtain the same result; it is not a verbatim required statement, as a recorded line statement would be.

In the screenshot above, you can see that the person who entered the syntax started with the most basic form of requirement. The system needs to hear: Highest followed by either level or education.

Furthermore, the words have to appear in that specific order, as shown by the checkbox to the right. You can also see that the syntax programmer wants to see those two words close together, as indicated by the “find within x words” box.

Starting your syntax build in this way is common, and it is the easiest approach.
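To make the rule concrete, here is a minimal Python sketch of an ordered "find within x words" check. It is not the Call Criteria implementation: the function names, the tokenizer, and the default of `within=2` are our own assumptions for illustration.

```python
import re

def tokenize(text):
    """Lowercase a transcript and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def found_in_order(transcript, first_words, second_words, within=2):
    """Return True if a word from first_words is followed by a word from
    second_words no more than `within` tokens later (order matters)."""
    tokens = tokenize(transcript)
    for i, token in enumerate(tokens):
        if token in first_words:
            # Only look forward, which enforces the required word order.
            window = tokens[i + 1 : i + 1 + within]
            if any(t in second_words for t in window):
                return True
    return False

# Initial syntax from the example: "highest" followed by "level" or "education".
FIRST = {"highest"}
SECOND = {"level", "education"}

print(found_in_order("What is your highest level of education?", FIRST, SECOND))
print(found_in_order("What is your education background?", FIRST, SECOND))
```

The first call is detected and the second is not, even though both questions ask for the same information. That gap is what the rest of this article is about.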

You may also assume that your call center agents will always say this phrase verbatim. If it were a statement said in the same order on every call, you would expect it to get 100% detection accuracy.

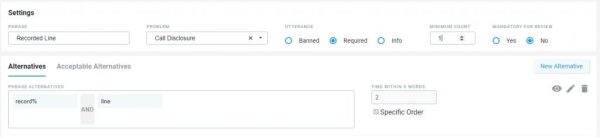

If you think that this may only be an issue for specific phrases, then, unfortunately, you would be wrong. As another example, we will look at a call disclosure phrase.

When you expect an agent to say “recorded line” within the call, you would start with a syntax build like the one you see above.

The % symbol after the word record is a wildcard. It means that any word starting with record will match, whatever its suffix: for example, recorded or recording.
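As an illustration of how such a wildcard behaves, the sketch below translates a term like `record%` into a regular expression. The helper name `wildcard_to_regex` is hypothetical, and we are assuming that % stands for zero or more trailing word characters; the real system may treat it differently.

```python
import re

def wildcard_to_regex(term):
    """Turn a syntax term such as 'record%' into a whole-word regex.
    Assumption: '%' stands for zero or more word characters."""
    body = r"\w*".join(re.escape(part) for part in term.split("%"))
    return re.compile(rf"\b{body}\b", re.IGNORECASE)

pattern = wildcard_to_regex("record%")

for line in ("You are on a recorded line",
             "We are recording this call",
             "This call is reported"):
    print(line, "->", bool(pattern.search(line)))
```

Note that the third line does not match: a wildcard helps with suffixes, but not with a mistranscribed word such as reported.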

The last example we would like to give you is an “office hours” phrase.

The above phrase is a little more involved at the outset, because you may be looking for any of the words in the first column combined with any from the second and third. Again, there is a wildcard, class%, meaning that the word could have any suffix, such as classes or classrooms, or could just be class.

The Problem

Phrases such as “highest level of education” and “office hours” are not fixed statements; they are conversational phrases that may appear in various ways. So we ran our initial syntax, as you see above, through the Call Criteria QA system. The system includes human analysts who listen to every call and independently score it based on the scorecard requirements, not on the syntax programming.

Here are the results of 609 calls based on the syntax from above that our QA team have analyzed:

- Highest Level Of Education. – 212 calls (34.8%) incorrectly flagged as not found.

- Call Disclosure. – 23 calls (3.78%) incorrectly flagged as not found.

- Office Hours. – 70 calls (11.5%) incorrectly flagged as not found.

Given this result, we know that syntax programming in the simple form shown above is not good enough.

However, the only reason that we at Call Criteria know that these calls were incorrectly flagged as missed is our human QA element. Without it, your KPIs and results would look worse than they should.

The Solution – Syntax Tuning

Call Criteria have a unique system to deal with this issue. All of our QA analysts are trained to identify acceptable alternatives to the required phrases, which is how we know the percentage of calls that were incorrectly marked as missed.

Our QA analysts also check for the opposite case: points that the speech analytics has marked as correct but that were, in fact, missed. That can happen because of similar-sounding words in the same order.
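The two kinds of disagreement described above can be counted with a short comparison between the automated flags and the human scores. This is only a sketch: the call IDs and results below are made-up sample data, and the `compare` function is our own illustration rather than part of the product.

```python
from collections import Counter

def compare(automated, human):
    """Count agreements and disagreements for one scorecard point.
    Both arguments map a call ID to True (phrase found) or False."""
    counts = Counter()
    for call_id, human_found in human.items():
        auto_found = automated[call_id]
        if human_found and not auto_found:
            counts["false_negative"] += 1  # said on the call, missed by syntax
        elif auto_found and not human_found:
            counts["false_positive"] += 1  # flagged by syntax, not actually said
        else:
            counts["agree"] += 1
    return counts

# Hypothetical sample data for four calls.
automated = {"call-1": True, "call-2": False, "call-3": True, "call-4": False}
human = {"call-1": True, "call-2": True, "call-3": False, "call-4": False}

print(compare(automated, human))
```

Here the human score is treated as the reference, which mirrors how the analysts' review is used to judge the syntax in this demo.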

There are three main ways in which we can complete syntax tuning:

- Using alternative words. – Sometimes agents will use words that mean the same thing as the syntax phrase, but they will not be picked up by voice analytics if the only syntax it is looking for is the highest level of education. For example, the agent may ask, “what is your education background?” Here, we can see that the question would fit into obtaining their highest level of education, but with entirely different words and orders.

- Using entirely new ways of saying something. – Sometimes, a prospective customer may tell you the answer to a question without the question being asked. Therefore, you would not need to ask the question. In that case, you are likely to miss a point on a scorecard because the specific words were not said, but there was no need to lose that point.

- Identifying mistranscribed words. – Unfortunately, wherever there is a requirement for transcription, i.e., any speech analytics, there will likely be some mistranscription. That will result in false positives/negatives.

How Does Call Criteria Help With Syntax Tuning?

Many call center QA companies will require you to sit through the calls to identify the missed but acceptable alternatives within the transcript. However, as you have already seen above, Call Criteria QA analysts will do that for you. They will list all of the acceptable alternatives that they find while listening to a call.

Identification

The significant benefit of having a human QA element is that you will find phrases you would never have thought to look for but that are still acceptable alternatives. Having that list allows you to look through it and add any words, in any order that you like, to the keyword syntax.

Recording

Having human analysts listen to the calls and record any acceptable alternatives means that your speech analytics needs far less start-up time for any new project or scorecard. For example, if you have 100 calls per day, and 50% of those use different phrases, you could have 50 alternatives as soon as your QA has been completed. All you need to do then is add the words and phrases to your syntax.

Using those alternatives, our resident speech analyst spent around an hour building out the syntax keywords for the highest level of education point and came up with three pages of other options.

Furthermore, he updated the call disclosure statement to four whole alternatives, with different keywords in them. Some of them are aliases or mistranscribed words, such as reported, instead of recorded. (If the sentence transcribes as: “this call is reported,” it is likely that it was a mistranscription of the word recorded.)

Finally, the office hours words were updated because the terms are sometimes further apart in a conversation than initially thought, or different words got the same response. That syntax was updated to five alternatives, each containing many words.

Now you can see that there are many different options for words that would qualify as a possible alternative, thus giving your speech analytics a better chance of providing you with accurate results.
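To show the idea in code, the sketch below checks a transcript against a list of alternatives for the call disclosure point, including the reported alias mentioned earlier. The three patterns are hypothetical examples of our own; they are not the four alternatives our speech analyst actually built.

```python
import re

# Hypothetical alternatives for the call disclosure point. "reported" is
# included only as an alias for the mistranscription of "recorded".
DISCLOSURE_ALTERNATIVES = [
    r"\brecord\w*\s+line\b",
    r"\bcall\s+is\s+(being\s+)?record\w*\b",
    r"\bcall\s+is\s+(being\s+)?reported\b",
]

def disclosure_found(transcript):
    """Return True if any alternative matches the transcript."""
    text = transcript.lower()
    return any(re.search(pattern, text) for pattern in DISCLOSURE_ALTERNATIVES)

for line in ("You are on a recorded line",
             "This call is reported for quality purposes",
             "Thank you for calling today"):
    print(line, "->", disclosure_found(line))
```

Each extra alternative widens what counts as a match, which is why the human review remains useful for catching any false positives that an alias introduces.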

The Results Of Call Criteria Syntax Tuning Using A Human Review Element

Using the human review element to tune the syntax, we have been able to create a drastic improvement on the results that we showed you in the “Problem” section further up the page. We ran the same 609 calls through the speech analytics and then passed them to the human reviewers for clarification – here are the new results:

- Highest Level Of Education. – Only 23 calls (3.78%) incorrect compared to 212 calls (34.8%) before tuning.

- Call Disclosure. – Only 2 calls (0.33%) incorrect compared to 23 calls (3.78%) before tuning.

- Office Hours. – Only 16 calls (2.63%) incorrect compared to 70 calls (11.5%) before tuning.

All of these improvements were gained with less than one hour of work per phrase. Furthermore, updated acceptable alternatives will continue to be produced, meaning that you can fine-tune the syntax further as time moves forward.
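If you would like to check the figures yourself, the short script below recomputes the percentages from the call counts reported in this article. The relative reduction it prints is our own derived figure, added for illustration; it is not a number from the original demo report.

```python
TOTAL_CALLS = 609

# (incorrect before tuning, incorrect after tuning), from the results above.
RESULTS = {
    "Highest Level Of Education": (212, 23),
    "Call Disclosure": (23, 2),
    "Office Hours": (70, 16),
}

def error_rate(incorrect, total=TOTAL_CALLS):
    """Percentage of calls scored incorrectly by the speech analytics."""
    return 100 * incorrect / total

def relative_reduction(before, after):
    """Percentage drop in incorrectly scored calls after tuning."""
    return 100 * (before - after) / before

for phrase, (before, after) in RESULTS.items():
    print(
        f"{phrase}: {error_rate(before):.2f}% -> {error_rate(after):.2f}% "
        f"({relative_reduction(before, after):.1f}% fewer incorrect calls)"
    )
```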

FAQs

Does the Speech analytics automatically redact sensitive data (credit card details, social security numbers, driving license, etc.) off the shelf?

Personal details can be redacted from both the transcript and the call audio, either by the length of a number or by removing numbers entirely. Other information can be redacted if required.
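For transcripts, the two options can be pictured with a simple regular expression. This is only a sketch of the idea and not how our redaction is implemented: the function name, the placeholder text, and the assumption that numbers appear as digits in the transcript are all ours.

```python
import re

def redact_numbers(transcript, min_digits=None):
    """Replace digit runs with a placeholder.
    With min_digits set, only runs of at least that many digits are
    redacted; with min_digits=None, every number is redacted."""
    if min_digits is None:
        pattern = r"\d+"
    else:
        pattern = rf"\d{{{min_digits},}}"
    return re.sub(pattern, "[REDACTED]", transcript)

text = "My card number is 4111111111111111 and I called 2 days ago"

print(redact_numbers(text, min_digits=9))  # redact long numbers only
print(redact_numbers(text))                # redact every number
```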

Do you get false positives by choosing lots of alternative words? (could you narrow down word selection so much that you broaden it?)

In theory, yes, you can get false positives if you add too many keywords to the syntax. However, we advise that you use the success criteria percentage. For example, a success rate of 85-95% may be acceptable. If the rate goes well above that, you may need to look at which words are being picked up incorrectly. That is not something you usually come across, though, unless you have a lot of similar words to look for. The best way to validate the scores is to use our human review for precision.
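A check like this is easy to automate. The sketch below uses the 85-95% band from the answer above as its defaults; the function name and the advice for rates below the band are our own assumptions, so adjust both to your scorecard.

```python
def review_detection_rate(found, total, low=85.0, high=95.0):
    """Suggest a next step based on how often a phrase was detected."""
    rate = 100 * found / total
    if rate > high:
        return "check for false positives"
    if rate < low:
        # Assumption: a low rate suggests missed acceptable alternatives.
        return "check for missed alternatives"
    return "ok"

print(review_detection_rate(90, 100))
print(review_detection_rate(99, 100))
print(review_detection_rate(70, 100))
```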

Conclusion

To conclude, the demo that we completed leaves us with a few simple takeaways:

- The more human review you have, the more you can fine-tune your speech model. The better the speech model, the less human review you need.

- Using the human review, along with syntax tuning, ensures that it is quicker to get your program off the ground and maintain high accuracy.

- You will get higher performance that keeps improving the more you use and tune the system.